Why Standard Math Tests Fail Neurodivergent Kids (And Alternatives to Look At)

TL;DR: Standard math tests don't measure what neurodivergent kids actually know. Research shows these assessments largely test processing speed, working memory capacity, and anxiety management, not mathematical understanding. Children with ADHD, autism, dyscalculia, and dyslexia consistently score below their true ability because the format of the test creates barriers the test was never designed to account for. The good news? Better approaches exist: dynamic assessment, curriculum-based measurement, game-based adaptive tools, and Universal Design for Learning can reveal what your child truly understands. This article breaks down the research and gives you concrete alternatives to advocate for.

Your child comes home with another low math score. You've watched them solve problems at the kitchen table. You know they understand more than that number suggests. So what's going on?

If your child is neurodivergent — whether they have ADHD, autism, dyscalculia, dyslexia, or a combination — there's a growing body of peer-reviewed research that says the problem isn't your child. It's the test.

Standard math assessments were designed for a neurotypical brain. They assume a child can manage time pressure, filter out distractions, hold multiple pieces of information in working memory, regulate anxiety in a high-stakes environment, and demonstrate knowledge in one rigid format — all at the same time. For many neurodivergent children, those assumptions don't hold. And when the assumptions break down, the test stops measuring math and starts measuring something else entirely.

Let's look at what the research actually says — and what you can do about it.

What Standard Math Tests Are Really Measuring

Speed, Not Understanding

Here's something that might surprise you: when your child with ADHD takes a timed math test, their score may reflect how fast their brain processes information — not whether they understand the math.

A 2024 study in the Journal of Attention Disorders found that children with ADHD performed significantly worse than their peers across every type of processing speed measure (Lee, 2024). More importantly, cognitive processing speed directly predicted math fluency scores. In other words, much of what these tests call "fluency" reflects speed, and speed is exactly where ADHD brains are at a disadvantage.

This isn't a new finding. Researchers have known for over a decade that timed math tests can trigger math anxiety across all achievement levels (Boaler, 2014). What makes this especially concerning is that anxiety under time pressure hits hardest in students with high working memory — the very kids with the most mathematical potential. The stress of a ticking clock blocks access to the working memory they need to solve problems, creating a gap between what they know and what the test captures.

And if you're thinking "well, maybe extra time would fix it," the research is less encouraging than you'd hope. A study of students in grades 5–7 found that extended time helped everyone perform better but didn't specifically close the gap for students with ADHD (Lewandowski et al., 2007). Both groups improved by similar amounts, so the extra minutes raised everyone's scores without addressing the underlying cognitive barriers that put students with ADHD behind in the first place.

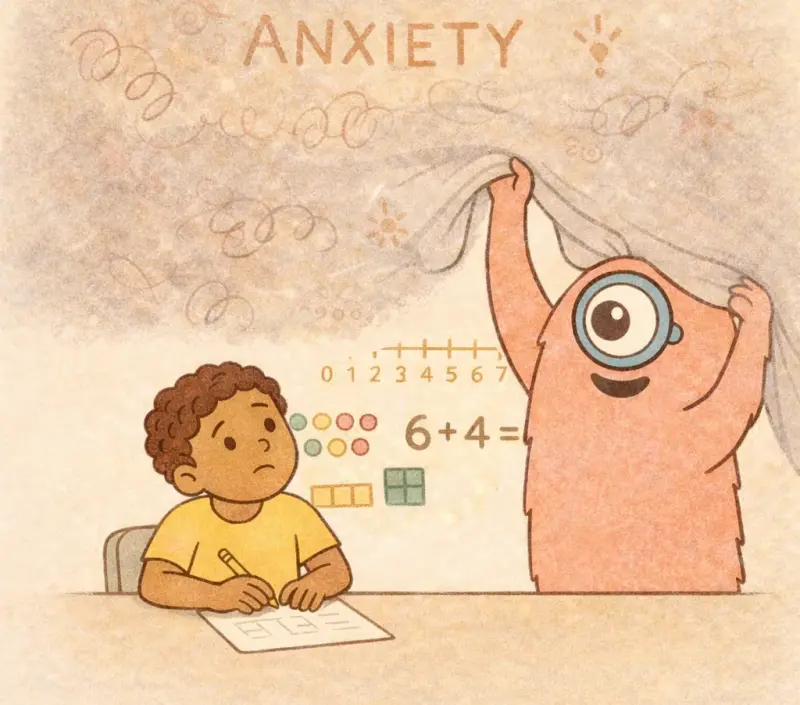

Anxiety That Goes Deeper Than Nerves

Every child can feel nervous before a test. But for neurodivergent children, math anxiety operates at a fundamentally different level. Research on children with dyscalculia found that they respond to math-related words as though they are negative emotional stimuli — their brains treat math vocabulary the way most people's brains treat threatening words (Rubinsten & Tannock, 2010). This isn't test-day jitters. It's a deep, automatic response that activates before the child has even started calculating.

What makes this worse is that dyscalculia and math anxiety are not simply stronger and weaker versions of the same problem: they disrupt different parts of working memory. Dyscalculia is linked to weaker visuospatial working memory (the ability to mentally picture and manipulate numbers), while math anxiety is linked to weaker verbal working memory (the ability to hold instructions and sequences in mind). A standard test can't tell these two problems apart, which means a child's score tells you almost nothing about why they struggled, and any support based on that score alone is likely to miss the mark (Mammarella et al., 2015).

A massive meta-analysis of over 906,000 participants confirmed what many parents already sense: timed, high-stakes, performance-based formats amplify anxiety's negative effects more than any other type of assessment (Caviola et al., 2022). If you've ever noticed your child can do math calmly at home but falls apart on test day, this is likely why — and it's not something they can simply "push through."

Recent research has added an important nuance: a 2025 study found that for children with dyscalculia specifically, the cognitive patterns that predict math performance in neurotypical children simply don't apply (Lievore, Caviola, & Mammarella, 2025). The assessment models were built on neurotypical norms, so they fundamentally misread how dyscalculic children think and perform.

The Working Memory Bottleneck

Many standard math tests — especially word problems and multi-step calculations — place enormous demands on working memory. For neurodivergent children, this creates an invisible barrier that has nothing to do with mathematical ability.

A review from Stanford University made the case clearly: working memory is critically involved in math learning, and its disruption is a core feature of dyscalculia (Menon, 2016). Children with dyscalculia may genuinely understand a concept but fail because the test format overwhelms their ability to hold and manipulate information — not because they lack the mathematical knowledge.

Multiple-choice formats create an additional trap. Research has shown that children with math learning difficulties specifically struggle with inhibitory control, the ability to suppress irrelevant information (Passolunghi & Siegel, 2004). When a test presents four answer choices, the incorrect options become cognitive noise that these children may struggle to filter out. A wrong answer can then reflect a filtering problem rather than a gap in mathematical knowledge.

Perhaps most telling: research has found that math anxiety specifically reduces visuospatial working memory during math tasks, but not in other contexts (Passolunghi et al., 2016). The testing situation itself degrades the very cognitive resources children need to perform. It's a catch-22: the test creates the conditions that work against the child's success.

If your child's challenges include working memory difficulties alongside ADHD or dyscalculia, understanding this bottleneck is the first step toward advocating for assessment approaches that don't penalize their cognitive profile.

Sensory Overload Silently Tanks Scores

For autistic children, there's another layer that standard tests completely ignore: the sensory environment.

A landmark study published in the American Journal of Occupational Therapy found something remarkable: in autistic children with average-range IQ, sensory processing scores explained 47% of the variance in academic performance, while estimated intelligence was not a significant predictor (Ashburner, Ziviani, & Rodger, 2008). Read that again. Nearly half of the differences in these children's academic performance tracked how their sensory systems handled the classroom environment, not how smart they were.

Fluorescent lighting, the scratch of pencils around the room, an uncomfortable chair, the hum of an air conditioner — for a child with sensory processing differences, these aren't minor annoyances. They're cognitive drains that leave fewer resources available for actual math.

Further research on autistic youth aged 8–14 found that the children who fare worst are those who are highly sensitive to sensory input but unable to engage avoidance behaviors during testing (Butera et al., 2020). They're overwhelmed but can't escape — and their scores reflect that overwhelm, not their ability.

Neurodivergent Kids Know More Than Their Scores Show

The gap between what neurodivergent children actually know and what standardized tests capture isn't a theory — it's been measured directly.

A study of high-functioning 9-year-olds with autism found that 90% showed significant discrepancies between their IQ scores and academic achievement (Estes et al., 2011). About 60% underperformed relative to their IQ in at least one area, while an equal number overperformed in another. Standardized tests simply cannot capture the "spiky" cognitive profiles that are characteristic of neurodivergence.

Even more striking: a neuroimaging study published in Biological Psychiatry found that autistic children actually outperformed IQ-matched neurotypical peers on numerical problem-solving and used more sophisticated decomposition strategies (Iuculano et al., 2014). Their brains organized mathematical information differently, and in this study more effectively. The gap between math achievement scores and IQ in the autism group provided neural-level evidence that a general ability score can miss real mathematical strength.

If your child has dyscalculia, the disconnect between test scores and understanding may feel especially familiar. Recognizing the signs of dyscalculia through behavioral and cognitive patterns — rather than relying solely on standardized scores — can give you a much more accurate picture of your child's needs.

Why Standard Accommodations Often Fall Short

If standard tests are the problem, can't we just fix them with accommodations? The research here is sobering.

A study examining five common testing accommodations for elementary and middle school students with ADHD — extended time, frequent breaks, reduced-distraction environments, oral presentation, and calculator use — found no evidence of effectiveness on standardized math or reading scores (Pritchard et al., 2016). Calculator use was actually associated with worse math scores in elementary students.

A broader systematic review published in 2021 confirmed this pattern across a much larger evidence base: most accommodations, including extended time, fail to show specific benefits for students with ADHD (Lovett & Nelson, 2021). The one exception was read-aloud accommodations, which showed specific benefits for younger students in two randomized experiments.

This doesn't mean accommodations are useless. It means that many of the accommodations written into IEPs aren't targeting the right problems. When the test format itself is the barrier, tweaking the conditions of that same test can only do so much.

What to Look at Instead

So if standard tests don't work, what does? Fortunately, researchers have been developing and testing alternatives for years. Here are the approaches with the strongest evidence behind them.

Dynamic Assessment: Testing How Kids Learn, Not Just What They Know

Traditional tests take a snapshot: can the child solve this problem right now, under these conditions? Dynamic assessment takes a fundamentally different approach. It measures how a child responds to instruction — how quickly they learn, what kind of support helps, and what their potential is, not just their current performance.

Research published in the Journal of Educational Psychology found that dynamic assessment captured a separate dimension of mathematical ability that static tests miss (Fuchs et al., 2008). It uniquely predicted how third graders would develop as math problem-solvers, even after accounting for language ability, nonverbal reasoning, attention, and prior math skills. For children with learning disabilities, this is potentially transformative: it can reveal learning potential that standard tests don't.

Dynamic assessment also addresses a practical problem that plagues standardized testing: false positives. A follow-up study found that adding dynamic assessment as a second screening step after a standard math test significantly reduced the number of children incorrectly flagged as at risk for math problem-solving difficulty (Fuchs et al., 2011). Standard screening alone wrongly identifies too many children as at-risk. Dynamic assessment helps distinguish between children who performed poorly due to limited learning opportunities and those with genuine learning differences.

For a hands-on model, researchers have developed Mathematics Dynamic Assessment (MDA), which combines interest assessment, concrete-to-abstract evaluation, error pattern analysis, and flexible interviews (Allsopp et al., 2008). Instead of just marking answers right or wrong, MDA reveals how a child thinks mathematically — making it especially valuable for neurodivergent learners whose errors often follow meaningful, instructive patterns.

Curriculum-Based Measurement: Small, Frequent Check-Ins That Actually Help

Instead of one high-stakes test every few months, curriculum-based measurement (CBM) uses brief, frequent assessments — often just a few minutes — to track progress over time. Think of it as checking your child's growth chart at every pediatrician visit rather than measuring them once a year.

A comprehensive research review found that when teachers used CBM to guide instruction, students showed significant achievement gains in mathematics (Stecker, Fuchs, & Fuchs, 2005). For students with disabilities specifically, the gains were linked to teachers using systematic decision rules and skills analysis feedback — not just collecting data, but acting on it in targeted ways.

CBM is especially powerful for neurodivergent children because it removes many of the barriers that make standardized tests unreliable: the assessments are brief (reducing anxiety and fatigue), familiar (reducing novelty stress), and focused on specific skills (reducing working memory demands). And because they happen frequently, a single bad day doesn't define a child's trajectory.
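To make "systematic decision rules" a little more concrete, here is a minimal Python sketch of one rule often taught alongside CBM, sometimes called the four-point rule. You draw a goal line (an "aimline") from the child's starting score to their target; if the four most recent weekly scores all fall below it, you change the instruction, and if all four sit above it, you raise the goal. The function names, the 20-week timeline, and the scores are hypothetical, and the rules used in the studies Stecker and colleagues reviewed varied, so treat this as an illustration rather than the method those studies used.

```python
def aimline(baseline: float, goal: float, total_weeks: int, week: int) -> float:
    """Expected score in a given week if progress runs in a straight line from baseline to goal."""
    return baseline + (goal - baseline) * week / total_weeks


def four_point_rule(scores: list[float], baseline: float, goal: float, total_weeks: int) -> str:
    """Hypothetical decision helper; scores[i] is the weekly probe score for week i + 1."""
    if len(scores) < 4:
        return "keep collecting data"
    recent_weeks = range(len(scores) - 3, len(scores) + 1)  # week numbers of the last four probes
    recent = scores[-4:]
    targets = [aimline(baseline, goal, total_weeks, w) for w in recent_weeks]
    if all(s < t for s, t in zip(recent, targets)):
        return "change instruction"  # consistently behind the goal line
    if all(s > t for s, t in zip(recent, targets)):
        return "raise the goal"  # consistently ahead of the goal line
    return "continue current plan"


# Hypothetical child: 10 digits correct at baseline, goal of 30 in 20 weeks,
# so the aimline rises by 1 point per week.
print(four_point_rule([11, 12, 12, 13, 13, 14], baseline=10, goal=30, total_weeks=20))
```

Here the last four scores (12, 13, 13, 14) all sit below the aimline values for weeks 3 to 6 (13, 14, 15, 16), so the sketch recommends changing instruction. The scores matter because they trigger a decision, which is the "acting on it" part the review highlights.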

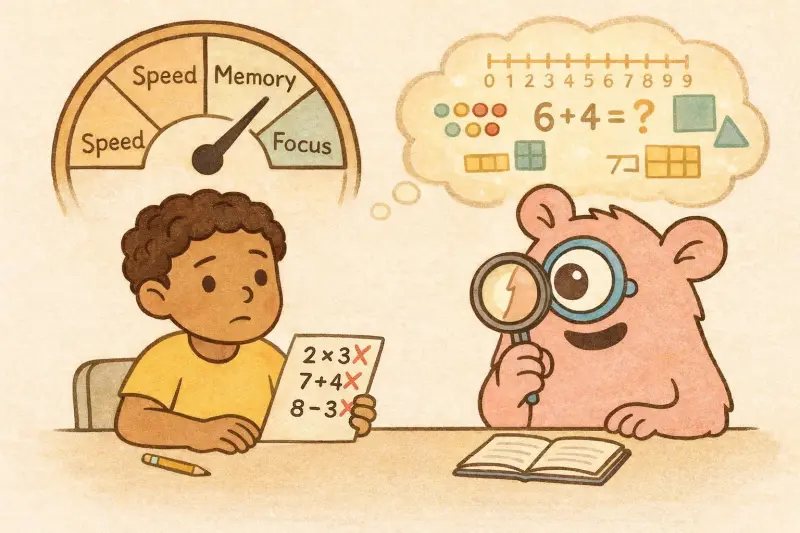

Game-Based and Adaptive Tools: Assessment That Doesn't Feel Like a Test

One of the most promising developments is the use of game-based, adaptive tools that assess mathematical understanding while children are engaged in play, taking much of the pressure of a formal test out of the equation.

A pioneering study published in Behavioral and Brain Functions tested an adaptive computer game called "The Number Race" with children aged 7–9 who had dyscalculia (Wilson et al., 2006). After just five weeks, children in this open trial showed improvements in number comparison speed and subtraction accuracy. Crucially, the game's adaptive algorithm kept children at roughly 75% accuracy: challenging enough to learn, easy enough to stay motivated. For children with co-occurring ADHD, this balance between challenge and achievability may be especially important.
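How can a game hold a child near 75% accuracy? The Number Race used its own adaptive algorithm, which is not what is shown here. This Python sketch swaps in a simpler, well-known technique, a weighted up/down staircase: after a correct answer the next item gets one step harder, and after a mistake it gets three steps easier. Difficulty settles where the expected movement is zero, that is where p × 1 = (1 − p) × 3, which gives p = 0.75. The "child" is a hypothetical simulated learner whose chance of answering correctly falls as difficulty rises; the numbers are illustrative, not data from the study.

```python
import math
import random


def chance_correct(ability: float, difficulty: float) -> float:
    """Hypothetical learner model: the harder the item relative to ability, the lower the chance of success."""
    return 1.0 / (1.0 + math.exp(difficulty - ability))


def weighted_staircase(ability: float, trials: int = 5000, step: float = 0.1, seed: int = 1):
    """One step harder after a correct answer, three steps easier after an error."""
    rng = random.Random(seed)  # fixed seed so the simulation is repeatable
    difficulty = 0.0
    correct = 0
    for _ in range(trials):
        if rng.random() < chance_correct(ability, difficulty):
            correct += 1
            difficulty += step  # success: nudge the challenge up
        else:
            difficulty -= 3 * step  # error: back off three times as far
    return correct / trials, difficulty


accuracy, final_difficulty = weighted_staircase(ability=1.5)
print(f"accuracy {accuracy:.3f}, final difficulty {final_difficulty:.2f}")
```

Because each correct answer adds one step and each error removes three, the long-run hit rate is pinned close to 75% whatever the child's ability; ability only changes where the difficulty settles (around ability − ln 3 in this toy model).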

A systematic review of 96 studies on game-based learning for learners with disabilities confirmed that game-based approaches can embed assessment within gameplay, reducing anxiety while capturing abilities that traditional tests miss (Tlili et al., 2022). The review noted that using games specifically as assessment tools — not just instructional ones — is still an emerging area with significant room for growth. Games like Monster Math combine learning with assessment for precisely this reason.

Computer-Adaptive Testing: Promising, With Caveats

Computer-adaptive tests (CATs) adjust difficulty in real time based on a child's responses. Get a question right, and the next one is harder. Get it wrong, and the next one is easier. In theory, this should be ideal for neurodivergent learners: no child has to sit through long runs of questions that are far too easy or far too hard.

A 2024 simulation study using data from 709 third-graders found that students with special educational needs were actually assessed with fewer items, reduced bias, and higher accuracy compared to students without disabilities (Ebenbeck & Gebhardt, 2024). That's encouraging.

But there's a catch. A review of CAT research cautioned that students with math-specific learning disabilities often have "spiky" profiles — they might struggle with basic computation but excel at higher-level reasoning (Stone & Davey, 2011). Adaptive algorithms that lower difficulty after a computation error might never present the conceptual questions where these children shine, effectively burying their strengths.
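A toy simulation shows how that can happen. This Python sketch assumes a hypothetical five-level item bank in which levels 1–2 are computation items and levels 3–5 are reasoning items, plus a hypothetical child who misses every computation item but solves every reasoning item. A naive one-track rule (harder after a right answer, easier after a wrong one) that opens on a computation item never climbs high enough to show this child a reasoning question. The second function swaps in a simplified take on content balancing: each strand gets its own difficulty track, and items alternate between strands. Real CATs rely on statistical models rather than simple up/down steps, so this illustrates the failure mode, not how any particular test works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    level: int   # 1 = easiest, 5 = hardest
    strand: str  # "computation" or "reasoning"


# Hypothetical item bank: one item type per difficulty level.
BANK = {lvl: Item(lvl, "computation" if lvl <= 2 else "reasoning") for lvl in range(1, 6)}
STRAND_LEVELS = {"computation": (1, 2), "reasoning": (3, 5)}


def spiky_child(item: Item) -> bool:
    """Hypothetical spiky profile: misses computation items, solves reasoning items."""
    return item.strand == "reasoning"


def naive_cat(answer, start_level: int = 2, n_items: int = 10):
    """One difficulty track: step up after a right answer, down after a wrong one."""
    level, log = start_level, []
    for _ in range(n_items):
        item = BANK[level]
        correct = answer(item)
        log.append((item, correct))
        level = min(level + 1, 5) if correct else max(level - 1, 1)
    return log


def strand_balanced_cat(answer, n_items: int = 10):
    """A separate difficulty track per strand, alternating strands item by item."""
    levels = {"computation": 2, "reasoning": 3}
    strands = list(STRAND_LEVELS)
    log = []
    for i in range(n_items):
        strand = strands[i % len(strands)]
        low, high = STRAND_LEVELS[strand]
        item = BANK[levels[strand]]
        correct = answer(item)
        log.append((item, correct))
        levels[strand] = min(levels[strand] + 1, high) if correct else max(levels[strand] - 1, low)
    return log


for name, run in [("naive", naive_cat(spiky_child)), ("balanced", strand_balanced_cat(spiky_child))]:
    reasoning_seen = sum(1 for item, _ in run if item.strand == "reasoning")
    print(name, "reasoning items shown:", reasoning_seen)  # naive: 0, balanced: 5
```

Under the naive rule the child's record is ten straight misses on computation items; with separate tracks, the same child also answers five reasoning items correctly. That is the "buried strengths" risk the review describes, in miniature.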

Universal Design for Learning: Building Better Tests From the Start

Rather than adding accommodations after the fact, Universal Design for Learning (UDL) aims to build assessments that work for diverse learners from the outset: multiple formats, flexible tools, and varied ways to demonstrate understanding.

A 2025 study analyzing national math assessment data found that when students with disabilities used UDL features like digital pencils and elimination tools, their math performance was meaningfully higher (Wei, 2025). Students with disabilities predominantly used text-to-speech features, while higher achievers gravitated toward digital annotation tools, suggesting that different learners benefit from different supports and that a one-size-fits-all test inherently disadvantages some.

However, UDL isn't just about adding tools. Research on universally designed undergraduate math courses found that while accessibility improved, disabled students still felt disconnected from their peer group (Nieminen & Pesonen, 2020). Truly inclusive assessment needs to go beyond removing barriers: it should actively build mathematical identity and belonging.

What Parents and Teachers Can Do Right Now

You don't need to wait for the education system to catch up. Here are practical steps you can take today.

For parents: Ask your child's teacher or school psychologist how math understanding is being assessed beyond standardized tests. Request to see examples of your child's mathematical thinking — not just scores. If your child has an IEP, advocate for assessment approaches that account for processing speed, working memory, and anxiety. And if your child's test scores don't match what you see at home, trust your observation — the research is on your side.

For teachers: Consider supplementing standardized assessments with curriculum-based measurement to track progress over time. Use error analysis to understand how students are thinking, not just whether they got the right answer. Where possible, offer multiple ways for students to demonstrate understanding — verbal explanations, visual representations, or hands-on problem-solving alongside written tests.

For everyone: Remember that the goal of assessment is to understand what a child knows so you can help them learn more. Any assessment that consistently underestimates a child's ability isn't doing its job — regardless of how "standard" it is.

Tools like Monster Math take a game-based, adaptive approach that adjusts to each child's level in real time — letting kids demonstrate what they know through play rather than high-pressure testing. It's one example of how the research-backed alternatives described here are already making their way into everyday practice.

FAQs

Do standard math tests work for any neurodivergent children? They can provide useful data points, but research consistently shows they underestimate ability for children with ADHD, autism, dyscalculia, and dyslexia. The key issue is that these tests measure processing speed, working memory, and anxiety management alongside (or instead of) mathematical understanding. A low score doesn't mean a child lacks math ability — it means the test format may not have captured it.

My child gets extra time on tests. Isn't that enough? Unfortunately, research suggests that extended time alone doesn't specifically close the gap for students with ADHD — both ADHD and non-ADHD students benefit equally from more time (Lewandowski et al., 2007). A broader systematic review found similar results: most standard accommodations don't show benefits specific to ADHD (Lovett & Nelson, 2021). Accommodations are still worth having, but they work best when paired with fundamentally different assessment approaches.

What is dynamic assessment, and how do I ask for it? Dynamic assessment measures how a child learns, not just what they currently know. Instead of a pass/fail snapshot, it involves teaching a concept and observing how the child responds to instruction. You can ask your child's school psychologist or special education team about incorporating dynamic assessment into evaluations. It's particularly valuable for children who may perform poorly on static tests due to anxiety, processing differences, or limited exposure.

Are game-based assessments taken seriously by schools? Game-based assessment is a growing area of research with promising evidence for reducing anxiety and capturing abilities standard tests miss, although using games specifically as assessment tools is still an emerging field. While most schools still rely on traditional assessments for formal evaluations, game-based tools are increasingly used for informal progress monitoring and instructional planning. As a parent, you can use these tools at home to build a fuller picture of your child's abilities and share that information with their school team.

My child is autistic and scores well on some math topics but terribly on others. Is that normal? Yes, this is exactly the "spiky profile" researchers describe. In one study, 90% of higher-functioning autistic 9-year-olds showed significant gaps between IQ and academic achievement across different domains (Estes et al., 2011). Some autistic children even outperform IQ-matched neurotypical peers on numerical problem-solving (Iuculano et al., 2014). Standard tests can't capture this unevenness, which is why multi-method assessment is so important.

How do I bring this up with my child's school without sounding confrontational? Frame it around shared goals. You might say: "I've noticed a gap between what my child can do at home and what their test scores show. I'd love to explore whether alternative assessment approaches — like curriculum-based measurement or dynamic assessment — might give us a more accurate picture of their strengths and needs." Coming prepared with specific research (like the studies cited here) can help move the conversation from opinion to evidence.

References

Allsopp, D. H., Kyger, M. M., Lovin, L., Gerretson, H., Carson, K. L., & Ray, S. (2008). Mathematics dynamic assessment: Informal assessment that responds to the needs of struggling learners in mathematics. TEACHING Exceptional Children, 40(3), 6–16.

Ashburner, J., Ziviani, J., & Rodger, S. (2008). Sensory processing and classroom emotional, behavioral, and educational outcomes in children with autism spectrum disorder. American Journal of Occupational Therapy, 62(5), 564–573.

Butera, C., Ring, P., Sideris, J., Jayashankar, A., Engel, C., Stein Duker, L. I., Shahamiri, E., & Bodfish, J. W. (2020). Impact of sensory processing on school performance outcomes in high functioning individuals with autism spectrum disorder. Mind, Brain, and Education, 14(3), 243–254.

Caviola, S., Toffalini, E., Giofrè, D., Mercader Ruiz, J., Szűcs, D., & Mammarella, I. C. (2022). Math performance and academic anxiety forms, from sociodemographic to cognitive aspects: A meta-analysis on 906,311 participants. Educational Psychology Review, 34, 363–399.

Ebenbeck, N., & Gebhardt, M. (2024). Differential performance of computerized adaptive testing in students with and without disabilities – A simulation study. Journal of Special Education Technology, 39(4).

Estes, A., Rivera, V., Bryan, M., Cali, P., & Dawson, G. (2011). Discrepancies between academic achievement and intellectual ability in higher-functioning school-aged children with autism spectrum disorder. Journal of Autism and Developmental Disorders, 41, 1044–1052.

Fuchs, L. S., Compton, D. L., Fuchs, D., Hollenbeck, K. N., Craddock, C. F., & Hamlett, C. L. (2008). Dynamic assessment of algebraic learning in predicting third graders' development of mathematical problem solving. Journal of Educational Psychology, 100(4), 829–850.

Fuchs, L. S., Compton, D. L., Fuchs, D., Hollenbeck, K. N., Hamlett, C. L., & Seethaler, P. M. (2011). Two-stage screening for math problem-solving difficulty using dynamic assessment of algebraic learning. Journal of Learning Disabilities, 44(4), 372–380.

Iuculano, T., Rosenberg-Lee, M., Supekar, K., Lynch, C. J., Khouzam, A., Phillips, J., Uddin, L. Q., & Menon, V. (2014). Brain organization underlying superior mathematical abilities in children with autism. Biological Psychiatry, 75(3), 223–230.

Lee, C. S. C. (2024). Processing speed deficit and its relationship with math fluency in children with attention-deficit/hyperactivity disorder. Journal of Attention Disorders, 28(4), 571–581.

Lewandowski, L. J., Lovett, B. J., Parolin, R., Gordon, M., & Codding, R. S. (2007). Extended time accommodations and the mathematics performance of students with and without ADHD. Journal of Psychoeducational Assessment, 25(1), 17–28.

Lievore, R., Caviola, S., & Mammarella, I. C. (2025). Children with and without dyscalculia: How mathematics anxiety and executive functions may (or may not) affect mental calculation. Learning and Individual Differences, 119, 102610.

Lovett, B. J., & Nelson, J. M. (2021). Systematic review: Educational accommodations for children and adolescents with attention-deficit/hyperactivity disorder. Journal of the American Academy of Child & Adolescent Psychiatry, 60(4), 448–457.

Mammarella, I. C., Hill, F., Devine, A., Caviola, S., & Szűcs, D. (2015). Math anxiety and developmental dyscalculia: A study on working memory processes. Journal of Clinical and Experimental Neuropsychology, 37(8), 878–887.

Menon, V. (2016). Working memory in children's math learning and its disruption in dyscalculia. Current Opinion in Behavioral Sciences, 10, 125–132.

Nieminen, J. H., & Pesonen, H. V. (2020). Taking universal design back to its roots: Perspectives on accessibility and identity in undergraduate mathematics. Education Sciences, 10(1), 12.

Passolunghi, M. C., & Siegel, L. S. (2004). Working memory and access to numerical information in children with disability in mathematics. Journal of Experimental Child Psychology, 88, 348–367.

Passolunghi, M. C., Caviola, S., De Agostini, R., Perin, C., & Mammarella, I. C. (2016). Mathematics anxiety, working memory, and mathematics performance in secondary-school children. Frontiers in Psychology, 7, 42.

Pritchard, A. E., Koriakin, T., Carey, L., Bellows, A., Jacobson, L., & Mahone, E. M. (2016). Academic testing accommodations for ADHD: Do they help? Learning Disabilities: A Multidisciplinary Journal, 21(2), 67–78.

Rubinsten, O., & Tannock, R. (2010). Mathematics anxiety in children with developmental dyscalculia. Behavioral and Brain Functions, 6, 46.

Stecker, P. M., Fuchs, L. S., & Fuchs, D. (2005). Using curriculum-based measurement to improve student achievement: Review of research. Psychology in the Schools, 42(8), 795–819.

Stone, E., & Davey, T. (2011). Computer-adaptive testing for students with disabilities: A review of the literature. ETS Research Report Series, 2011(1), i–32.

Tlili, A., Denden, M., Huang, R., Padilla-Zea, N., Sun, T., & Burgos, D. (2022). Game-based learning for learners with disabilities—What is next? A systematic literature review from the activity theory perspective. Frontiers in Psychology, 12, 814691.

Wei, X. (2025). Universal design element utilization and mathematics performance: Implications for diverse student populations. Journal of Special Education Technology. Advance online publication.

Wilson, A. J., Revkin, S. K., Cohen, D., Cohen, L., & Dehaene, S. (2006). An open trial assessment of "The Number Race," an adaptive computer game for remediation of dyscalculia. Behavioral and Brain Functions, 2, 20.
